How Jio Hotstar Scales Live Streaming to Millions During IPL

Introduction

When millions of people open their app at the exact same moment to watch an IPL final, something extraordinary has to happen behind the scenes.

It’s not just “video streaming.”

It’s a distributed systems problem at massive scale.

During peak IPL matches, platforms like Jio Hotstar have reportedly handled tens of millions of concurrent viewers. Delivering live video to that many users — with low latency, no buffering, and consistent video quality — is an engineering challenge that goes far beyond running a few backend servers.

This is not a single-machine problem.

It is a globally distributed architecture problem.

In this article, we’ll break down how a large-scale live streaming system works — from video ingestion and transcoding to CDN distribution, scaling strategies, and failure handling.

The Real Problem: Scale

Let’s assume:

20 million concurrent users

Average video bitrate: 6 Mbps

That means the platform must deliver:

20,000,000 × 6 Mbps = 120 Terabits per second

No single origin cluster can realistically push that much traffic to viewers on its own.
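
The arithmetic above can be checked in a few lines. This is only a back-of-the-envelope sketch: the function name is made up for illustration, and the viewer count and bitrate are the assumed figures from this section, not measured values.

```python
def aggregate_bandwidth_tbps(concurrent_viewers: int, bitrate_mbps: float) -> float:
    """Total delivery bandwidth in terabits per second (1 Tbps = 1,000,000 Mbps)."""
    total_mbps = concurrent_viewers * bitrate_mbps
    return total_mbps / 1_000_000


# 20 million viewers at an assumed average of 6 Mbps each
print(aggregate_bandwidth_tbps(20_000_000, 6))  # 120.0
```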

So the core idea becomes:

Never let your main servers handle all the traffic.

Instead, you distribute the load intelligently.

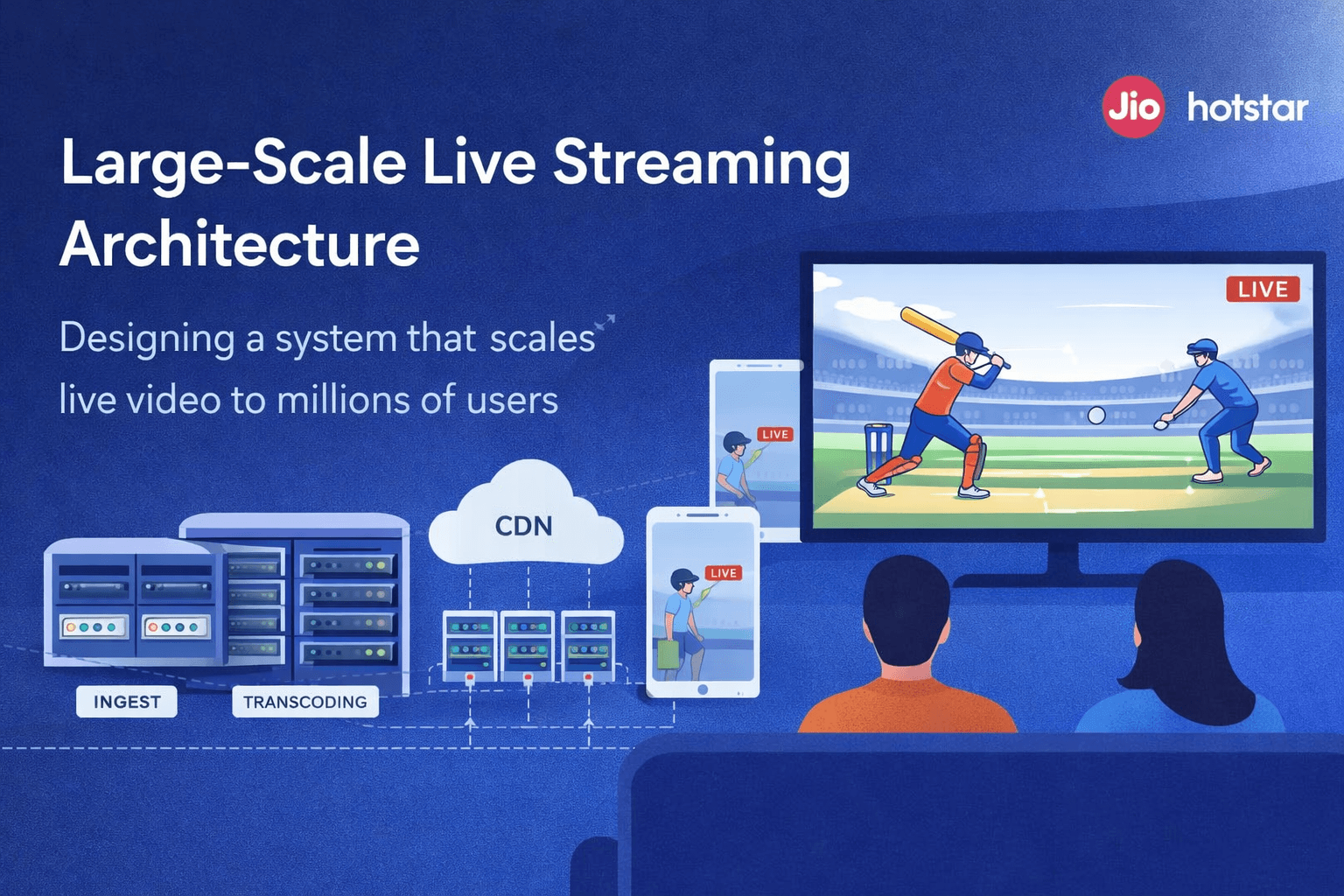

To handle this scale, the system is designed as a multi-layered distributed pipeline.

Here’s a simplified view of how live video flows from the stadium to your device:

Let’s break down each layer and understand why it exists.

Step 1: Live Feed Enters the System (Ingest Layer)

Everything starts with the live broadcast feed from stadium cameras.

This raw video feed is:

High bitrate

Continuous

Sensitive to interruptions

The ingest servers act as the entry gate. Their job is to:

Receive the raw stream

Validate it

Forward it reliably to the processing pipeline

In production, there are multiple ingest endpoints. If one fails, traffic shifts automatically to another. Live sports cannot afford downtime.
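
The failover idea can be sketched as a priority list of endpoints where the first healthy one wins. The hostnames and the IngestEndpoint class below are hypothetical, and a real ingest tier would learn health from probes rather than a manually set flag.

```python
from dataclasses import dataclass


@dataclass
class IngestEndpoint:
    host: str             # hypothetical hostname, for illustration only
    healthy: bool = True  # in practice this would be updated by health probes


def pick_ingest(endpoints: list[IngestEndpoint]) -> IngestEndpoint:
    """Return the first healthy endpoint in priority order."""
    for endpoint in endpoints:
        if endpoint.healthy:
            return endpoint
    raise RuntimeError("no healthy ingest endpoint available")


endpoints = [
    IngestEndpoint("ingest-a.example.com"),
    IngestEndpoint("ingest-b.example.com"),
]
primary = pick_ingest(endpoints)   # ingest-a while everything is healthy
endpoints[0].healthy = False       # simulate the primary failing
backup = pick_ingest(endpoints)    # traffic shifts to ingest-b
```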

Step 2: Transcoding Into Multiple Qualities

Not every user has the same internet speed.

So the system converts the original feed into multiple resolutions:

180p

360p

720p

1080p

4K

This process is called transcoding.

Why is this important?

Because of Adaptive Bitrate Streaming (ABR).

If your internet slows down, the player automatically switches to a lower resolution. If it improves, it switches back up. This prevents buffering and keeps playback smooth.
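
A minimal sketch of that switching decision is shown below. The bitrates in the ladder are illustrative guesses, not the platform's real encoding settings, and real players also consider buffer level and recent throughput history rather than a single measurement.

```python
# Hypothetical bitrate ladder: (label, bitrate in kbps). Values are illustrative only.
LADDER = [
    ("180p", 200),
    ("360p", 800),
    ("720p", 2500),
    ("1080p", 6000),
    ("4K", 16000),
]


def choose_rendition(throughput_kbps: float, safety: float = 0.8) -> str:
    """Pick the highest rendition whose bitrate fits within a safety margin
    of the measured throughput, falling back to the lowest rendition."""
    budget = throughput_kbps * safety
    best = LADDER[0][0]
    for label, kbps in LADDER:
        if kbps <= budget:
            best = label
    return best


print(choose_rendition(10_000))  # 1080p
print(choose_rendition(1_200))   # 360p
```

The safety factor leaves headroom so that a small dip in throughput does not immediately cause a stall.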

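This transcoding stage is compute-heavy and often GPU-accelerated. It is also horizontally scalable: encoding capacity grows with the number of feeds and renditions being produced, not with the number of viewers, because each rendition is encoded once and then served to everyone through the CDN.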

Step 3: Breaking Video Into Small Chunks

Instead of sending a continuous stream, the video is broken into small 2–6 second segments.

For example:

segment1.ts

segment2.ts

segment3.ts

Along with these segments, an index file is generated: an .m3u8 playlist in HLS, or an MPD manifest in DASH. It tells the player which segments to download and in what order.
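
To make this concrete, here is a rough sketch that builds an HLS-style live media playlist for a window of segments. The function name and the segment naming are assumptions that follow the example above. The tags are standard HLS tags, but a production packager writes many more of them.

```python
import math


def build_live_playlist(first_seq: int, durations: list[float]) -> str:
    """Build a minimal HLS media playlist for a sliding window of live segments."""
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        # Target duration must be at least as long as the longest segment.
        f"#EXT-X-TARGETDURATION:{math.ceil(max(durations))}",
        # Sequence number of the first segment still listed in the window.
        f"#EXT-X-MEDIA-SEQUENCE:{first_seq}",
    ]
    for offset, duration in enumerate(durations):
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(f"segment{first_seq + offset}.ts")
    # No #EXT-X-ENDLIST tag: its absence tells the player the stream is still live.
    return "\n".join(lines) + "\n"


playlist = build_live_playlist(1, [4.0, 4.0, 3.6])
print(playlist)
```

The player re-fetches this file every few seconds, and each refresh lists newer segments as older ones drop out of the window.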

Why chunk the video?

Makes quality switching easier

Enables efficient caching

Allows recovery if one segment fails

Supports parallel downloads

Technically, you are not “streaming” in real time.

You are rapidly downloading small encrypted video files.

Step 4: Encryption and Security

Live sports content is extremely valuable.

Before distribution, every video segment is encrypted.

When a user presses “Watch Now”:

The app authenticates the user

It fetches a decryption key securely from backend servers

Segments are decrypted on the device during playback

Without the key, the video files are useless.

This protects against piracy and unauthorized distribution.
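
The authenticate-then-fetch-key flow can be sketched with a signed, short-lived token. Everything here (the function names, the token format, the in-memory key) is a hypothetical simplification. Production platforms typically rely on a full DRM system rather than anything this simple.

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional

SIGNING_SECRET = secrets.token_bytes(32)  # known only to the backend (assumption)
CONTENT_KEY = secrets.token_bytes(16)     # 128-bit key used to encrypt segments


def _sign(payload: str) -> str:
    return hmac.new(SIGNING_SECRET, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(user_id: str, ttl_seconds: int = 300, now: Optional[float] = None) -> str:
    """Called after the user is authenticated: returns 'user:expiry:signature'."""
    issued_at = time.time() if now is None else now
    payload = f"{user_id}:{int(issued_at + ttl_seconds)}"
    return f"{payload}:{_sign(payload)}"


def fetch_key(token: str, now: Optional[float] = None) -> bytes:
    """Key endpoint: hands out the decryption key only for a valid, unexpired token."""
    user_id, expires, signature = token.rsplit(":", 2)
    if not hmac.compare_digest(signature, _sign(f"{user_id}:{expires}")):
        raise PermissionError("invalid token signature")
    current = time.time() if now is None else now
    if current > int(expires):
        raise PermissionError("token expired")
    return CONTENT_KEY


token = issue_token("user-42")
key = fetch_key(token)  # the player would use this key to decrypt segments
```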

Step 5: Origin Server (The Master Copy)

The origin server stores the master version of all video chunks.

But here’s the important part:

It does not serve millions of users directly.

If it did, it would collapse under load.

Instead, it only serves regional distribution nodes. Think of it as the source of truth, not the delivery engine.

Step 6: Regional CDN Distribution

To reduce latency and backbone traffic, the content is pushed to regional CDN hubs.

For example:

North India

South India

Europe

Southeast Asia

These regional CDNs cache content closer to users.

This dramatically reduces:

Latency

Network congestion

Origin load

Step 7: Edge CDN — Closest to the User

When you hit play, your app connects to the nearest edge node.

This node:

Already has cached segments

Delivers them with very low latency

Handles massive parallel requests
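
The origin, regional, and edge layers can be modeled as caches that each fall back to the layer above on a miss. This is a toy simulation with made-up class names and no eviction or expiry, meant only to show why the origin sees so few requests.

```python
class Origin:
    """Source of truth: counts how many requests actually reach it."""

    def __init__(self):
        self.requests = 0

    def get(self, segment: str) -> bytes:
        self.requests += 1
        return f"bytes-of-{segment}".encode()


class CacheTier:
    """A cache layer that asks its upstream only on a miss (no eviction in this sketch)."""

    def __init__(self, name: str, upstream):
        self.name = name
        self.upstream = upstream  # another CacheTier or the Origin
        self.store = {}
        self.hits = 0
        self.misses = 0

    def get(self, segment: str) -> bytes:
        if segment in self.store:
            self.hits += 1
            return self.store[segment]
        self.misses += 1
        data = self.upstream.get(segment)
        self.store[segment] = data
        return data


origin = Origin()
regional = CacheTier("south-india", origin)
edges = [CacheTier(f"edge-{i}", regional) for i in range(3)]

# 3,000 viewers spread across three edge nodes all request the same segment.
for viewer in range(3000):
    edges[viewer % 3].get("segment1.ts")

print(origin.requests)                   # 1
print(regional.hits, regional.misses)    # 2 1
```

Three thousand viewer requests turn into three regional requests and a single origin request, which is the whole point of the layering.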

The key metric here is cache hit ratio.

Higher cache hit ratio =

Less load on origin =

Lower infrastructure cost.

At IPL scale, even a small drop in cache efficiency can mean massive cost spikes.
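
A quick calculation shows how sensitive this is. The sketch below assumes the 120 Tbps total from earlier and treats every cache miss as traffic that must be fetched from the layer above. The function name is hypothetical.

```python
def upstream_load_tbps(total_tbps: float, hit_ratio: float) -> float:
    """Traffic that misses the cache and must be fetched from the layer above."""
    return total_tbps * (1.0 - hit_ratio)


for ratio in (0.99, 0.98, 0.95):
    load = upstream_load_tbps(120, ratio)
    print(f"{ratio:.0%} hit ratio -> {load:.1f} Tbps fetched upstream")
```

Under these assumptions, dropping from a 99% to a 98% hit ratio doubles the upstream traffic from 1.2 to 2.4 Tbps.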

What Happens When Millions Join at Once?

Traffic spikes are expected during live matches.

To handle this:

Encoding clusters auto-scale

CDNs are pre-warmed with popular streams

Load balancers distribute traffic

Multi-CDN strategies may be used

If one CDN fails, traffic can shift to another.

This prevents total outage during peak events.
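
One way to picture a multi-CDN strategy is weighted selection among the CDNs that are currently healthy. The CDN names and weights below are invented for illustration, and real traffic steering usually happens in DNS or in the player based on live performance data.

```python
import random


def pick_cdn(weights: dict[str, float], healthy: set[str], rng: random.Random) -> str:
    """Choose a CDN at random, proportionally to its weight, skipping unhealthy ones."""
    candidates = {name: w for name, w in weights.items() if name in healthy and w > 0}
    if not candidates:
        raise RuntimeError("no healthy CDN available")
    names = list(candidates)
    return rng.choices(names, weights=[candidates[n] for n in names], k=1)[0]


weights = {"cdn-a": 0.7, "cdn-b": 0.3}  # hypothetical traffic split
rng = random.Random(0)

# Normal operation: sessions are split roughly 70/30 between the two CDNs.
normal = [pick_cdn(weights, {"cdn-a", "cdn-b"}, rng) for _ in range(1000)]

# cdn-a fails: every new session is routed to cdn-b.
failover = [pick_cdn(weights, {"cdn-b"}, rng) for _ in range(1000)]
```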

Handling Failures

At this scale, failures are normal.

Examples:

Encoder crashes

Region-level outages

CDN node failures

Key management service downtime

Production systems prepare for these:

Redundant encoders

Multi-region deployment

Health checks and failover routing

Monitoring and alerting systems
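
Health checks and failover routing can be sketched as a tracker that marks a node unhealthy after several consecutive failed probes and routes around it. The class name, node names, and the threshold of three are assumptions chosen for the example.

```python
class HealthTracker:
    """Marks a node unhealthy after N consecutive failed health checks."""

    def __init__(self, failure_threshold: int = 3):
        self.failure_threshold = failure_threshold
        self.consecutive_failures = {}

    def record(self, node: str, ok: bool) -> None:
        # A single success resets the counter; failures accumulate.
        if ok:
            self.consecutive_failures[node] = 0
        else:
            self.consecutive_failures[node] = self.consecutive_failures.get(node, 0) + 1

    def is_healthy(self, node: str) -> bool:
        return self.consecutive_failures.get(node, 0) < self.failure_threshold

    def route(self, preferred: list[str]) -> str:
        """Return the first healthy node in preference order."""
        for node in preferred:
            if self.is_healthy(node):
                return node
        raise RuntimeError("all nodes are unhealthy")


tracker = HealthTracker(failure_threshold=3)
nodes = ["encoder-1", "encoder-2"]

for _ in range(3):
    tracker.record("encoder-1", ok=False)  # three failed probes in a row

print(tracker.route(nodes))  # encoder-2
```

Requiring several consecutive failures avoids flapping on a single dropped probe, at the cost of slightly slower detection.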

Reliability is engineered, not assumed.

The Bigger Engineering Lessons

Live streaming at IPL scale is not about video players.

It’s about:

Distributed caching

Horizontal scalability

Encryption and security

Traffic engineering

Failure tolerance

Cost optimization

Every architectural decision is a tradeoff between:

Latency

Quality

Cost

Availability

That’s what makes it a true system design challenge.

Final Thoughts

What looks simple — tapping “Watch Now” — is actually a globally distributed, multi-layered infrastructure working in real time.

The brilliance of systems like this is that users never see the complexity.

They only see smooth playback.

And that is the mark of good backend engineering.